Introduction

Ever felt like the tech world is moving a mile a minute, leaving the rest of us scrambling to keep up? Well, the rise of federated learning is one of those moments where innovation leaps forward—yet most folks aren’t entirely sure how it works, let alone how to keep it safe. At first glance, federated learning looks like a clever workaround for privacy issues: instead of sending raw data to a central server, devices train models locally and only share the model updates. Sounds foolproof, right?

Not so fast! While federated learning can dramatically improve privacy, it doesn’t magically solve every security challenge. Attackers, being the persistent troublemakers they are, can target the model updates, exploit communication channels, or poison the training process. That’s where federated learning model security steps in: a growing field that aims to reinforce this fancy distributed learning approach with the protection it deserves.

In this detailed and easygoing guide, we’ll break down the ins and outs of federated learning model security, explore the threats lurking around the corner, and outline practical ways to harden your federated system. Buckle up: there’s more to this than meets the eye!

What Is Federated Learning, Really?

Before diving headfirst into the security weeds, let’s make sure we’re all on the same page.

Federated learning is a machine learning setup where the model is trained locally on edge devices—like smartphones, wearables, or IoT gadgets—and only the learned parameters (or gradients) are sent back to the server. No raw data changes hands. Pretty neat!

In simple terms:

- Your device gets a copy of the model.
- It fine-tunes the model with your private data.
- Instead of sharing the data itself, it sends back only the tweaks.
- The server aggregates thousands (or millions) of updates.
- Voilà! A smarter, better-trained global model emerges.
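To make those steps concrete, here is a minimal sketch of one style of federated averaging on a toy one-dimensional linear model. Everything in it (the `local_update`, `federated_average`, and `run_round` names, the learning rate, the synthetic data) is an assumption made for illustration, not a reference implementation.

```python
import random

def local_update(weights, data, lr=0.1):
    """Hypothetical client step: one pass of SGD on y = w*x + b."""
    w, b = weights
    for x, y in data:
        err = (w * x + b) - y   # prediction error on one private example
        w -= lr * err * x       # gradient of 0.5*err**2 with respect to w
        b -= lr * err           # gradient with respect to b
    return [w, b]

def federated_average(client_weights, client_sizes):
    """Average client models, weighted by each client's number of examples."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]

def run_round(global_weights, clients):
    """One round: send the model out, train locally, aggregate the results."""
    updates = [local_update(list(global_weights), data) for data in clients]
    sizes = [len(data) for data in clients]
    return federated_average(updates, sizes)

random.seed(0)
# Three clients, each holding private samples of y = 2x + 1.
clients = [
    [(x, 2 * x + 1) for x in (random.uniform(-1, 1) for _ in range(20))]
    for _ in range(3)
]

global_weights = [0.0, 0.0]
for _ in range(50):
    global_weights = run_round(global_weights, clients)
print(global_weights)  # should end up close to [2.0, 1.0]
```

Notice that the server only ever sees `updates`, never the `(x, y)` pairs. That is the privacy win, and also exactly the surface the rest of this guide worries about.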

Sounds like a privacy win? Absolutely. Is it a security win by default? Unfortunately… not so much.

Understanding Federated Learning Model Security

So what exactly is federated learning model security? At its core, it’s the set of methods, protocols, and defensive strategies designed to:

- Protect the data used in local training
- Secure the communication channels between devices and servers
- Prevent malicious actors from poisoning or reverse-engineering the model
- Ensure integrity and trustworthiness across decentralised systems

Federated learning shifts risk away from centralised data pools, but it also introduces new attack surfaces. That’s why the concept of federated learning model security has become so crucial.

Why Federated Learning Needs Serious Security

If data never leaves your device, why worry? Well, because attackers aren’t picky—they go after whatever’s vulnerable.

Here’s a quick look at why breaches can still happen:

- Model updates can leak information. Believe it or not, gradients can reveal surprisingly detailed clues about the training data.
- Poisoning attacks are a thing. A single compromised device can subtly poison the model and tank its performance.
- Communication channels can be intercepted. Even encrypted connections aren’t immune to sophisticated snooping.
- Federated systems scale like crazy. And with more devices come more potential entry points for attackers to exploit.

In short, federated learning isn’t invincible—it just shifts the battlefield.

Top Threats in Federated Learning Model Security

Let’s unravel some of the most common threats haunting federated learning ecosystems. Spoiler alert: some of them are sneakier than you’d expect.

1. Model Inversion Attacks

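Ever had someone piece together an entire story just from hearing the ending? Model inversion attacks pull off a similar trick. By analysing the gradients sent to the server, attackers can reconstruct parts of the original training data. (When the attack works from shared gradients rather than the finished model, you’ll also see it called gradient inversion or gradient leakage.)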

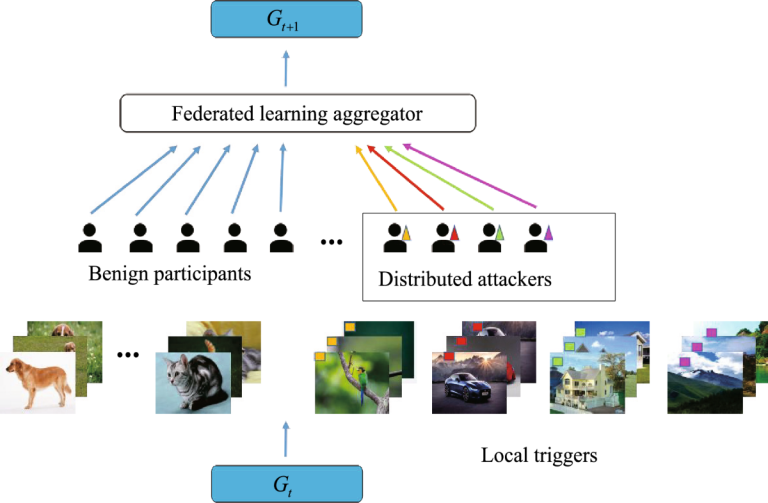
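For a feel of how much a gradient can give away, consider the simplest possible case: a linear model trained on a single example with squared error. The sketch below is a toy built on that assumption (the function names and numbers are made up), but the leak is exact. The weight gradient is the error times the input, and the bias gradient is the error itself, so dividing one by the other hands back the private input.

```python
def linear_gradients(w, b, x, y):
    """Gradients of 0.5 * (w.x + b - y)**2 for a single training example."""
    err = sum(wi * xi for wi, xi in zip(w, x)) + b - y
    grad_w = [err * xi for xi in x]   # d(loss)/dw = err * x
    grad_b = err                      # d(loss)/db = err
    return grad_w, grad_b

def reconstruct_input(grad_w, grad_b):
    """Recover x from the shared gradients, since grad_w / grad_b = x."""
    if grad_b == 0:
        raise ValueError("zero error: these gradients carry no signal")
    return [g / grad_b for g in grad_w]

private_x = [0.2, -1.5, 3.0]   # never leaves the device
grad_w, grad_b = linear_gradients(w=[0.5, 0.1, -0.3], b=0.0, x=private_x, y=1.0)

recovered = reconstruct_input(grad_w, grad_b)
print(recovered)  # matches private_x up to floating-point rounding
```

Real models and batched updates make the attack harder and approximate rather than exact, but the toy case shows why "we only share gradients" is not the same as "we share nothing".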

2. Data Poisoning Attacks

Bad actors don’t always need a full-scale breach—they can quietly tamper with training data or model updates on compromised devices. Suddenly, the global model starts making biased or incorrect predictions.

Common poisoning methods include:

- Label flipping
- Backdoor insertion
- Gradient manipulation
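Label flipping is the easiest of these to picture. Here is a tiny sketch of what a compromised client might do to its local dataset before training; the `flip_labels` helper and the cat/dog data are purely illustrative.

```python
def flip_labels(dataset, source, target):
    """Return a poisoned copy where every `source` label becomes `target`."""
    return [
        (features, target if label == source else label)
        for features, label in dataset
    ]

clean = [([0.1, 0.9], "cat"), ([0.8, 0.2], "dog"), ([0.2, 0.7], "cat")]
poisoned = flip_labels(clean, source="cat", target="dog")

print([label for _, label in poisoned])  # ['dog', 'dog', 'dog']
```

The client then trains honestly on dishonest data, so its update looks structurally normal. That is what makes this kind of poisoning awkward to spot from the server side.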

3. Sybil Attacks

This is where attackers create multiple fake clients to flood the system with manipulative updates. It’s like voting with dozens of fake IDs.

4. Communication Eavesdropping

Even if raw data isn’t transmitted, intercepted updates can still spill valuable clues. Worse yet, man-in-the-middle attackers can inject malicious updates directly.

5. Model Free-Riding

Picture a student who never helps with group work but still gets full credit. In federated learning, some clients might benefit from global updates without meaningfully contributing their own.

Key Techniques for Strengthening Federated Learning Model Security

Luckily, federated learning isn’t helpless. Below are some of the most widely used techniques for fortifying its defences.

1. Differential Privacy

This method adds carefully calibrated noise to gradient updates, usually after clipping each update so that no single client can dominate. Sure, it may reduce accuracy somewhat, but in exchange you get a formal, quantifiable privacy guarantee.
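A common recipe is to clip each update to a maximum L2 norm and then add Gaussian noise scaled to that bound. The sketch below shows that recipe applied on the client side. The names `clip_update` and `privatize_update` and the default values are assumptions, and the code does not compute the resulting privacy budget, which depends on the noise level, the number of rounds, and how clients are sampled.

```python
import math
import random

def clip_update(update, max_norm):
    """Scale the update down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(v * v for v in update))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [v * scale for v in update]

def privatize_update(update, max_norm=1.0, noise_multiplier=1.0, rng=random):
    """Clip, then add Gaussian noise with std = noise_multiplier * max_norm."""
    clipped = clip_update(update, max_norm)
    sigma = noise_multiplier * max_norm
    return [v + rng.gauss(0.0, sigma) for v in clipped]

raw_update = [3.0, 4.0]                      # L2 norm is 5
print(clip_update(raw_update, max_norm=1.0)) # roughly [0.6, 0.8]
print(privatize_update(raw_update, rng=random.Random(42)))
```

Clipping is what makes the noise meaningful: without a bound on each client's contribution, no fixed amount of noise could hide it.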

2. Secure Multi-Party Computation (SMPC)

Ever tried solving a puzzle with teammates without showing each other the pieces? SMPC works similarly: the system aggregates updates without revealing any single client’s contribution.
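One well-known flavour of this idea is pairwise masking: every pair of clients agrees on a random mask, one adds it and the other subtracts it, and all the masks cancel when the server sums everything up. The sketch below is a simplified illustration under loud assumptions. Updates are already quantised to integers, the masks come from a single seeded generator instead of pairwise key agreement, and client dropouts are not handled.

```python
import random

MODULUS = 2 ** 32  # all arithmetic is done modulo this value

def pairwise_masks(num_clients, dim, seed=0):
    """For each pair i < j, draw a shared mask: i adds it, j subtracts it."""
    rng = random.Random(seed)  # stand-in for real pairwise key agreement
    masks = [[0] * dim for _ in range(num_clients)]
    for i in range(num_clients):
        for j in range(i + 1, num_clients):
            shared = [rng.randrange(MODULUS) for _ in range(dim)]
            for k in range(dim):
                masks[i][k] = (masks[i][k] + shared[k]) % MODULUS
                masks[j][k] = (masks[j][k] - shared[k]) % MODULUS
    return masks

def mask_update(update, mask):
    """What a client actually sends: its update hidden behind its mask."""
    return [(u + m) % MODULUS for u, m in zip(update, mask)]

def aggregate(masked_updates):
    """Server-side sum; the pairwise masks cancel out here."""
    dim = len(masked_updates[0])
    return [sum(u[k] for u in masked_updates) % MODULUS for k in range(dim)]

updates = [[3, 10], [5, 20], [7, 30]]  # integer-quantised client updates
masks = pairwise_masks(len(updates), dim=2)
masked = [mask_update(u, m) for u, m in zip(updates, masks)]

print(aggregate(masked))  # [15, 60]
```

The server learns the sum and nothing else: each individual `masked` vector looks like random noise on its own.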

3. Homomorphic Encryption

This magical-sounding method lets servers compute on encrypted data without decrypting it. It’s computationally heavy—but incredibly powerful.
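To take some of the magic out of it, here is a toy version of the Paillier scheme, which is additively homomorphic: multiplying two ciphertexts gives an encryption of the sum of their plaintexts. The primes below are absurdly small and chosen only so the example runs instantly, so treat this as a sketch of the idea and never as usable cryptography. It needs Python 3.8 or newer for the modular inverse via `pow`.

```python
import math
import random

def generate_keys(p=1009, q=1013):
    """Toy Paillier key pair. These primes are far too small to be secure."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # valid shortcut because we use g = n + 1
    return n, (lam, mu)

def encrypt(n, m, rng=random):
    """Encrypt integer m (0 <= m < n) under public key n, with g = n + 1."""
    n_sq = n * n
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n_sq) * pow(r, n, n_sq)) % n_sq

def add_encrypted(n, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % (n * n)

def decrypt(n, private_key, c):
    lam, mu = private_key
    l_value = (pow(c, lam, n * n) - 1) // n
    return (l_value * mu) % n

n, private_key = generate_keys()
client_values = [12, 30, 7]  # integer-quantised update values
ciphertexts = [encrypt(n, v) for v in client_values]

encrypted_sum = ciphertexts[0]
for c in ciphertexts[1:]:
    encrypted_sum = add_encrypted(n, encrypted_sum, c)

print(decrypt(n, private_key, encrypted_sum))  # 49
```

In a federated setup, the aggregator would do the `add_encrypted` step without ever holding `private_key`, which is the whole point.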

4. Robust Aggregation Algorithms

These algorithms detect and neutralise abnormal model updates. Popular options include:

- Krum
- Trimmed Mean
- Median aggregation
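Two of these are simple enough to sketch in a few lines. The example below shows coordinate-wise median and trimmed mean shrugging off one wildly malicious update that would wreck a plain average. The function names and the numbers are illustrative, and Krum is left out because it needs pairwise distance scoring that doesn't fit a short snippet.

```python
import statistics

def coordinate_median(updates):
    """Take the median of each coordinate across all client updates."""
    return [statistics.median(column) for column in zip(*updates)]

def trimmed_mean(updates, trim):
    """Drop the `trim` lowest and highest values per coordinate, then average."""
    if 2 * trim >= len(updates):
        raise ValueError("trim would remove every update")
    result = []
    for column in zip(*updates):
        kept = sorted(column)[trim:len(column) - trim]
        result.append(sum(kept) / len(kept))
    return result

honest = [[1.0, 2.0], [1.1, 1.9], [0.9, 2.1], [1.0, 2.0]]
malicious = [[100.0, -100.0]]
updates = honest + malicious

plain_mean = [sum(column) / len(column) for column in zip(*updates)]
print(plain_mean)                     # first coordinate dragged to about 20.8
print(coordinate_median(updates))     # [1.0, 2.0]
print(trimmed_mean(updates, trim=1))  # roughly [1.03, 1.97]
```

The catch is that both methods assume malicious clients are a minority. If attackers control more updates than you trim, the outliers become the consensus.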

5. Blockchain-Based Security

Not just for crypto enthusiasts! Blockchain can provide tamper-resistant logs of client updates and aggregation steps.
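The property that matters here is tamper evidence, and you can see it in a plain hash chain, where every log entry commits to the one before it. The sketch below is only that building block: a single-party, in-memory log with made-up record fields, without the consensus or replication that a real blockchain adds on top.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry

def entry_hash(prev_hash, record):
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

def append_entry(log, record):
    """Append a record that commits to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": entry_hash(prev_hash, record),
    })

def verify_log(log):
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True

log = []
append_entry(log, {"round": 1, "client": "a", "update_digest": "abc"})
append_entry(log, {"round": 1, "client": "b", "update_digest": "def"})
print(verify_log(log))  # True

log[0]["record"]["update_digest"] = "tampered"
print(verify_log(log))  # False
```

Storing a digest of each update rather than the update itself keeps the log small and avoids creating a second place where updates could leak.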

Best Practices for Implementing Federated Learning Model Security

Ready to put the theory into action? Here are practical steps to build a safer, more trusted system:

✅ 1. Validate Client Updates

Run sanity checks before aggregation.
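What counts as a sanity check depends on your system, but shape, finiteness, and size are a sensible starting point. Here is a minimal sketch, with a hypothetical `validate_update` helper and thresholds you would tune for your own model.

```python
import math

def validate_update(update, expected_dim, max_norm):
    """Return (ok, reason) for a client update given as a list of floats."""
    if len(update) != expected_dim:
        return False, "wrong dimension"
    if not all(math.isfinite(v) for v in update):
        return False, "non-finite value"
    norm = math.sqrt(sum(v * v for v in update))
    if norm > max_norm:
        return False, "norm too large"
    return True, "ok"

print(validate_update([0.1, -0.2, 0.05], expected_dim=3, max_norm=1.0))
print(validate_update([0.1, float("nan"), 0.0], expected_dim=3, max_norm=1.0))
print(validate_update([50.0, 0.0, 0.0], expected_dim=3, max_norm=1.0))
```

Checks like these won't stop a careful attacker, but they do filter out broken clients and the clumsiest attacks before they reach the aggregator.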

✅ 2. Encrypt Everything

From local updates to server communication—encrypt it all.

✅ 3. Monitor for Anomalies

Suspicious patterns often signal poisoning attempts.
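One cheap signal to watch is the size of each update relative to its peers. The sketch below flags updates whose L2 norm is far from the round's median, using a modified z-score based on the median absolute deviation. The `flag_outliers` name and the 3.5 threshold are assumptions to adapt, and this is only one of many possible signals.

```python
import math
import statistics

def flag_outliers(updates, threshold=3.5):
    """Return indices of updates whose norm is far from the round's median."""
    norms = [math.sqrt(sum(v * v for v in u)) for u in updates]
    med = statistics.median(norms)
    mad = statistics.median([abs(n - med) for n in norms])
    if mad == 0:
        # Most norms are identical, so anything different stands out.
        return [i for i, n in enumerate(norms) if n != med]
    # 0.6745 rescales the MAD so scores are comparable to standard z-scores.
    return [
        i for i, n in enumerate(norms)
        if 0.6745 * abs(n - med) / mad > threshold
    ]

round_updates = [
    [1.0, 0.0],
    [0.0, 1.1],
    [0.9, 0.0],
    [1.0, 0.1],
    [30.0, 40.0],  # norm 50, far larger than the rest
]
print(flag_outliers(round_updates))  # [4]
```

Using the median rather than the mean matters here: a single huge update would inflate a mean and standard deviation enough to hide itself.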

✅ 4. Rotate Keys Frequently

Static encryption keys? Big no-no.

✅ 5. Choose Privacy-Preserving Algorithms

Balance performance with safety.

✅ 6. Limit Client Influence

Prevent any one device from over-contributing.
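When the server weights clients by how much data they report, a single client claiming a huge dataset can swamp everyone else. One simple guard is to cap the count used for weighting. The `capped_weights` helper and the cap of 50 below are illustrative assumptions.

```python
def capped_weights(sample_counts, cap):
    """Aggregation weights where no client counts for more than `cap` samples."""
    capped = [min(n, cap) for n in sample_counts]
    total = sum(capped)
    if total == 0:
        raise ValueError("no samples reported")
    return [c / total for c in capped]

reported = [10, 20, 10000]  # the third client claims a suspiciously large dataset

uncapped = [n / sum(reported) for n in reported]
print(uncapped)                          # third client holds over 99% of the weight
print(capped_weights(reported, cap=50))  # [0.125, 0.25, 0.625]
```

Since reported counts are self-declared, a cap like this limits the damage from a client that simply lies about its dataset size.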

FAQs About Federated Learning Model Security

1. Is federated learning inherently secure?

Not inherently—though it improves privacy, it still faces multiple security risks.

2. Can attackers reverse-engineer data from model updates?

Yes, through model inversion attacks—unless proper protections like differential privacy are used.

3. Does adding security reduce model accuracy?

Sometimes, yes. But the trade-off is generally worth it.

4. Is homomorphic encryption practical in real-world federated systems?

It’s becoming more practical over time, but it’s still computationally heavy.

5. What’s the simplest way to boost federated learning model security?

Start with robust aggregation and encrypted communication channels.

Final Thoughts: Where Does Federated Learning Go From Here?

At the end of the day, federated learning isn’t just a promising technology; it’s a paradigm shift in how we think about data, privacy, and collaborative intelligence. But as cool and futuristic as it sounds, it’s not immune to threats. Without strong federated learning model security, the whole system can crumble in ways that aren’t always obvious until it’s too late.

The good news? With the right mix of encryption, robust algorithms, privacy-preserving tools, and good old-fashioned vigilance, federated learning can absolutely become one of the safest—and smartest—ways to train machine learning models at scale.

And who knows? With ongoing research and innovation, we might soon reach a point where distributed systems are not only more private but also more secure than any centralised approach. Now that’s a future worth looking forward to!
